Anyone who’s spent time in online multiplayer games knows the sting of toxic behavior. You’re mid-match, focused on strategy, and suddenly your chat erupts with slurs, harassment, or threats that completely derail the experience. I’ve been covering gaming industry developments for over a decade, and I’ve watched this problem grow from an occasional annoyance into a genuine crisis. What’s changed recently? The sophisticated deployment of artificial intelligence to detect and manage toxic players before they poison entire communities.

The Scope of Toxicity in Gaming

Let’s be honest about the numbers here. According to the Anti-Defamation League’s annual survey, roughly 83% of adult online gamers experience some form of harassment during gameplay. That statistic has barely budged in five years despite countless “zero tolerance” policies from game developers.

Traditional moderation simply cannot keep pace. When League of Legends processes millions of matches daily, no human team can review even a fraction of player interactions. This is where machine learning stepped in, not as a silver bullet but as a desperately needed force multiplier.

How AI Detection Systems Actually Work

The technology behind toxic behavior detection isn’t magic, though it sometimes feels like it when you see bans happening within minutes of an offense.

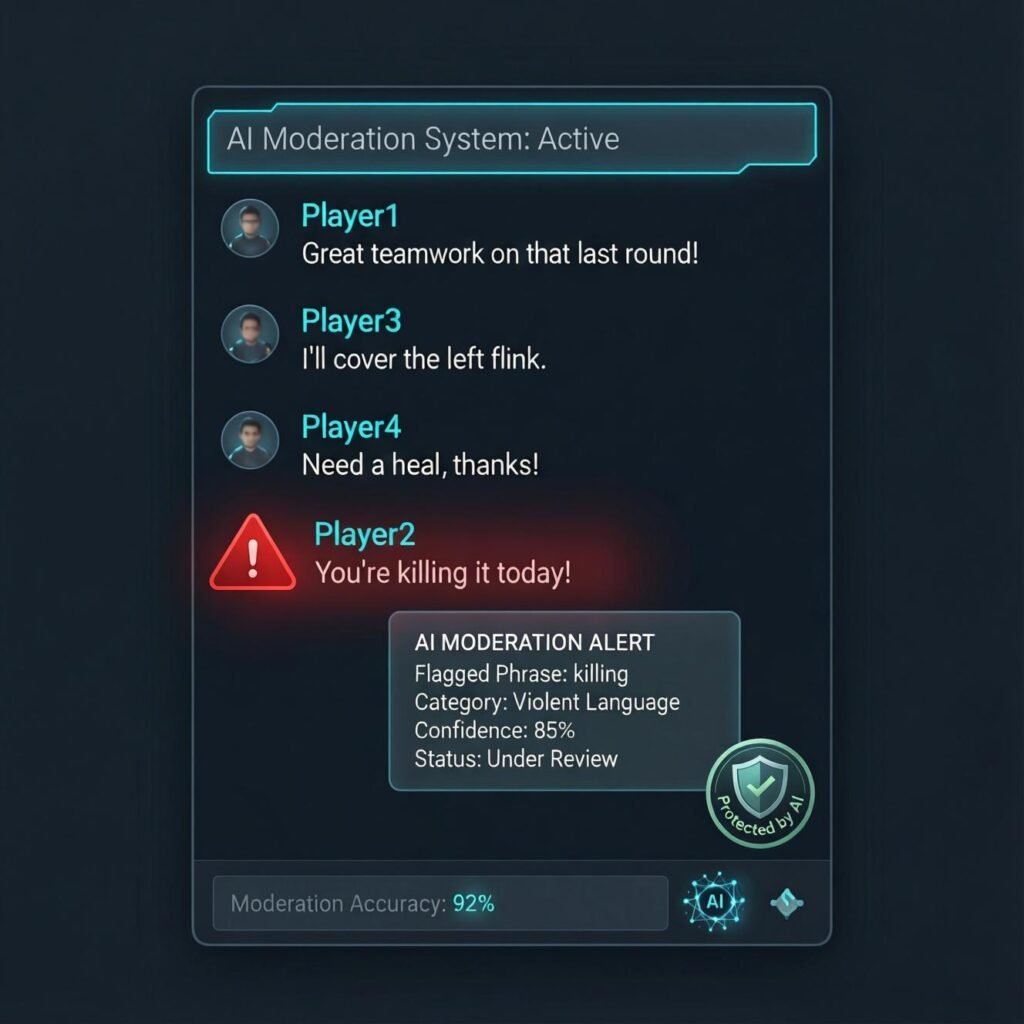

Modern systems operate on multiple layers. Text-based analysis represents the most mature technology. Natural language processing models scan in-game chat and voice transcripts, identifying not just banned words but contextual meaning. Someone typing “you’re killing it” reads very differently from “I’ll kill you.” These systems parse sentiment, intent, and patterns that would exhaust human moderators.
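To make the “killing it” versus “kill you” distinction concrete, here is a deliberately tiny Python sketch. It is an illustration under heavy assumptions, not how any studio’s system works: production tools rely on trained language models, while this toy uses two hand-written patterns. The names `THREAT_PATTERNS`, `BENIGN_PATTERNS`, and `classify_message` are hypothetical.

```python
import re

# Hypothetical hand-written patterns, for illustration only. Real systems
# learn these distinctions from labeled data instead of fixed rules.
THREAT_PATTERNS = [
    re.compile(r"\bi(?:'ll| will|'m going to| am going to)\s+kill\s+you\b"),
]
BENIGN_PATTERNS = [
    re.compile(r"\b(?:you're|you are)\s+killing\s+it\b"),
]


def normalize(message: str) -> str:
    """Lowercase the message and map curly apostrophes to straight ones."""
    return message.lower().replace("\u2019", "'")


def classify_message(message: str) -> str:
    """Return 'threat', 'benign', or 'unknown' for a single chat message."""
    text = normalize(message)
    if any(p.search(text) for p in THREAT_PATTERNS):
        return "threat"
    if any(p.search(text) for p in BENIGN_PATTERNS):
        return "benign"
    return "unknown"
```

Both phrases contain the same root word, so a plain banned-word list would treat them identically. The surrounding words are what separate praise from a threat, and that is the part learned models handle far better than rules like these.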

Voice detection has advanced remarkably in recent years. Companies like Modulate have developed real-time voice analysis that can flag harassment, hate speech, and threatening language during live gameplay. The accuracy isn’t perfect, but it’s impressive enough that major studios have started licensing the technology.

Behavioral pattern recognition adds another dimension. AI monitors gameplay actions such as intentional team killing, griefing, and exploitation of game mechanics to harass others. When I spoke with a developer at Ubisoft last year, they explained how their Rainbow Six Siege system tracks player movement and actions to identify deliberate teamkill patterns versus accidental friendly fire. The distinction matters enormously for fair enforcement.
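A rough sketch of the deliberate-versus-accidental idea, assuming nothing about Ubisoft’s actual implementation: count friendly-fire incidents per player and treat repetition, especially against the same teammate, as the signal. The thresholds and the names `FriendlyFireEvent` and `flag_deliberate_teamkillers` are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FriendlyFireEvent:
    """One friendly-fire kill (hypothetical record shape)."""
    attacker: str
    victim: str


def flag_deliberate_teamkillers(events, max_incidents=2, same_victim_limit=2):
    """Return the set of attackers whose friendly fire looks patterned.

    A player is flagged when they exceed max_incidents in total, or hit
    the same teammate at least same_victim_limit times. Both thresholds
    are illustrative assumptions.
    """
    per_attacker = Counter(e.attacker for e in events)
    per_pair = Counter((e.attacker, e.victim) for e in events)

    flagged = {a for a, count in per_attacker.items() if count > max_incidents}
    flagged |= {a for (a, _victim), count in per_pair.items()
                if count >= same_victim_limit}
    return flagged
```

A single stray grenade never trips either threshold here, while two kills on the same teammate does. Real systems would weigh far richer signals such as movement and aim, but the principle of judging patterns rather than single events is the same.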

Real World Implementation and Results

Riot Games deserves credit for pioneering large-scale AI moderation through the evolution of its “Tribunal” system. What started as community-driven review transformed into machine learning systems that now issue most penalties automatically. Their data shows a meaningful reduction in repeat offenses, though they’re careful not to claim complete victory.

Xbox’s reputation system combines player reports with AI analysis to create behavioral scores. Players with consistently poor scores find themselves matched primarily with similar players, essentially quarantining toxic behavior. Microsoft reports that this approach reduced enforcement actions by 25% simply by creating social consequences.
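Here is one way a blended score and a quarantine pool could fit together, sketched in Python. This is not Microsoft’s formula, which isn’t public in this article; the weights, the threshold, and the names `behavior_score` and `matchmaking_pool` are all assumptions chosen for illustration.

```python
def behavior_score(report_rate: float, ai_flag_rate: float,
                   report_weight: float = 0.4) -> float:
    """Blend two rates in [0, 1] into a score in [0, 1]; higher is better.

    report_rate: share of recent matches with a player report (assumed input).
    ai_flag_rate: share of recent matches flagged by automated analysis.
    """
    if not (0.0 <= report_rate <= 1.0 and 0.0 <= ai_flag_rate <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    penalty = report_weight * report_rate + (1.0 - report_weight) * ai_flag_rate
    return 1.0 - penalty


def matchmaking_pool(score: float, threshold: float = 0.5) -> str:
    """Place low-scoring players in a separate pool (hypothetical labels)."""
    return "standard" if score >= threshold else "low_reputation"
```

Weighting the automated signal more heavily than raw reports is one possible design choice, since reports can be filed in bad faith. A real system would tune that balance against its own data.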

Activision’s deployment of ToxMod, Modulate’s voice moderation tool, in Call of Duty marked a significant step for voice detection at scale. The system processes billions of voice minutes monthly, flagging potential violations for review. The initial rollout showed a 50% reduction in repeat voice harassment offenses.

The Limitations Nobody Wants to Discuss

Here’s where I need to temper the optimism. These systems aren’t infallible, and the gaming industry sometimes oversells their capabilities.

False positives remain a genuine problem. Competitive banter, sarcasm between friends, and cultural communication differences all create detection challenges. I’ve personally witnessed players receive temporary bans for clearly joking exchanges with longtime squad mates. The AI couldn’t understand the relationship context.

Sophisticated bad actors adapt quickly. Within weeks of new detection systems launching, communities share workarounds. Creative misspellings, coded language, and dogwhistle terms evolve faster than training data can accommodate. It becomes an arms race between detection algorithms and people determined to behave badly.
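The simplest end of that arms race can be sketched in a few lines of Python: undo common character swaps and padding before checking a blocklist. The substitution table, the mild placeholder term, and the names `normalize_evasion` and `contains_blocked_term` are assumptions for illustration. Coded language and dogwhistles are exactly what this kind of normalization cannot catch.

```python
import re

# Hypothetical substitution table covering a few common character swaps.
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})


def normalize_evasion(text: str) -> str:
    """Undo simple obfuscation: character swaps, separators, stretched letters."""
    text = text.lower().translate(LEET_MAP)
    text = re.sub(r"[^a-z\s]", "", text)       # drop punctuation used as padding
    text = re.sub(r"(.)\1{2,}", r"\1", text)   # collapse runs of 3+ repeated letters
    return re.sub(r"\s+", " ", text).strip()


def contains_blocked_term(text: str, blocklist: set) -> bool:
    """Check whether any normalized word appears in the blocklist."""
    return any(word in blocklist for word in normalize_evasion(text).split())
```

For example, `contains_blocked_term("u r a L0S3R", {"loser"})` matches, while a newly invented code word sails straight through until someone adds it to the list or retrains the model.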

The voice detection technology struggles with accents, dialects, and non-English languages. Most systems train primarily on American English, creating enforcement disparities that raise legitimate fairness concerns.

Ethical Considerations in Automated Enforcement

Privacy implications cannot be ignored. When AI monitors every chat message and voice interaction, it creates surveillance systems that some players find uncomfortable regardless of beneficial intent. Where does that data go? How long is it retained? These questions deserve transparent answers.

Proportionality in punishment requires human judgment that AI lacks. A 14-year-old having a bad day and typing something regrettable differs fundamentally from a serial harasser on their twentieth offense. Current systems often apply identical penalties, missing the nuance that experienced moderators naturally understand.
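One modest step toward proportionality is an escalation ladder that takes offense history into account and hands the hardest cases to a person. The Python sketch below is a hypothetical policy, not any platform’s actual rules; the ladder steps and the name `choose_penalty` are assumptions.

```python
# Hypothetical escalation ladder; the final step routes to a human moderator.
PENALTY_LADDER = ["warning", "chat_mute_24h", "suspension_7d", "human_review"]


def choose_penalty(prior_offenses: int, severe: bool = False) -> str:
    """Pick a penalty that escalates with history instead of a flat ban.

    A severe offense (for example, a threat) skips one step up the ladder.
    """
    if prior_offenses < 0:
        raise ValueError("prior_offenses cannot be negative")
    step = prior_offenses + (1 if severe else 0)
    return PENALTY_LADDER[min(step, len(PENALTY_LADDER) - 1)]
```

Under this sketch a first-time offender gets a warning, while the serial harasser on their twentieth offense lands in front of a human reviewer. It still can’t see age or intent, which is why the human step matters.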

Cultural context presents ongoing challenges. What constitutes toxic behavior varies significantly across regions. Trash-talking traditions in competitive gaming differ between North America, Europe, and Asia. Training globally consistent systems while respecting cultural differences remains unsolved.

Where This Technology Is Heading

The next generation of detection systems will likely incorporate multimodal analysis, combining text, voice, video, and behavioral signals simultaneously. This holistic approach should improve accuracy while reducing false positives.

Predictive intervention represents a fascinating frontier. Rather than punishing toxic behavior after it occurs, some developers are testing systems that identify at-risk situations and intervene proactively. Imagine receiving a gentle prompt suggesting a break when gameplay patterns indicate mounting frustration.

The industry is also exploring “reform pathways” where AI helps toxic players understand their behavior patterns and offers structured rehabilitation rather than permanent exclusion. Early data suggests this approach reduces recidivism more effectively than simple punishment.

The Bottom Line

AI-powered toxic behavior detection has genuinely improved online gaming environments. The technology isn’t perfect, and we should resist treating it as a complete solution. Human oversight remains essential for complex cases. But as someone who remembers gaming before any moderation existed, I find the progress undeniable. The goal isn’t creating sterile, fun-free environments; it’s ensuring that everyone can enjoy competitive gaming without enduring abuse. That balance takes constant refinement, but we’re closer than ever.

Frequently Asked Questions

How does AI detect toxic behavior in games?

AI uses natural language processing, voice analysis, and behavioral pattern recognition to identify harassment, hate speech, and disruptive gameplay in real time.

Can AI detect sarcasm or jokes between friends?

Not reliably. Current systems struggle with context, relationship dynamics, and cultural nuances, leading to occasional false positives.

Which games use AI moderation?

Major titles including League of Legends, Call of Duty, and Rainbow Six Siege employ AI detection systems, as does the Xbox platform as a whole.

Is my voice chat being recorded for AI analysis?

Many games now analyze voice chat in real time, though policies on data retention vary by platform and publisher.

Can players appeal AI-issued bans?

Most platforms offer appeal processes, though response times and success rates vary significantly between companies.

Does AI moderation actually reduce toxic behavior?

Industry data suggests a meaningful reduction in repeat offenses, though complete elimination remains out of reach.